From Instructions to Insights: Building an AI Testing System

Agentic AI · Prompt Writing · Process Lead

I led the project for an in-house Testing Agent, writing the instruction files that shaped how the Agent behaved, asked questions, and evaluated results.

Role

Instruction writer, product manager, test designer

Partners

Engineering

Focus

Prompt writing, voice guidelines, success criteria, content documentation, team education

The Challenge

As the Lowe's Conversational Experiences team began switching from NLU-based flows to AI Agent-managed flows, we were still relying on manual testing to ensure the new AI Agent functioned as expected. Manual testing was inefficient: it took many hours to determine whether an update was effective.

The Opportunity

If we created a Testing Agent, we could run repeatable tests automatically. We could then spend our time reviewing results and identifying needed updates instead of manually running hundreds of tests.

The Approach

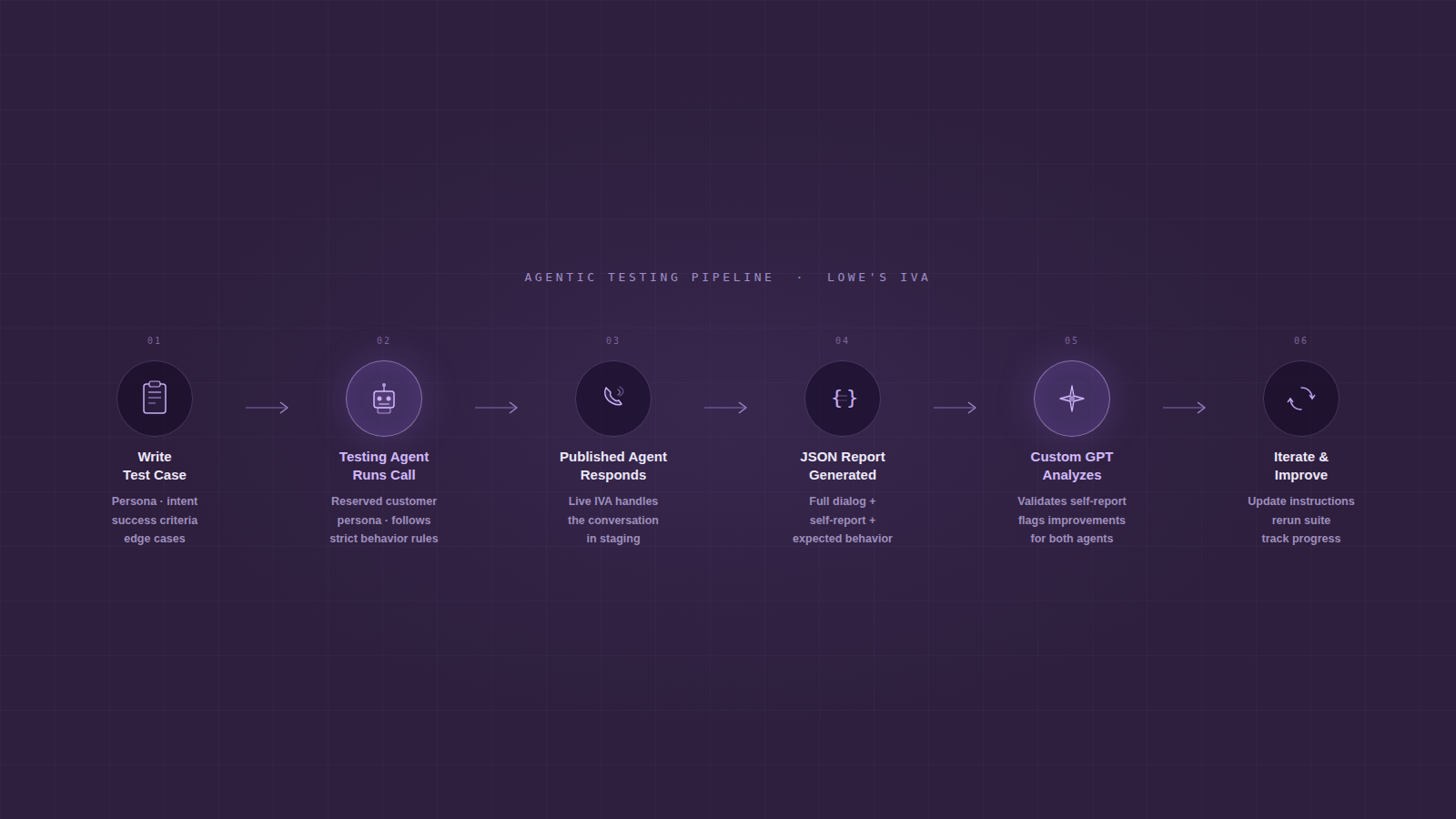

I partnered with a senior software developer to create a Testing Agent that made our testing process faster, more consistent, and easier to scale.

Define the Plan

The developer and I aligned on the initial scope and success criteria. We planned test coverage across core customer intents, edge cases, and regression scenarios so we could quickly confirm whether a change improved the experience or introduced new issues.

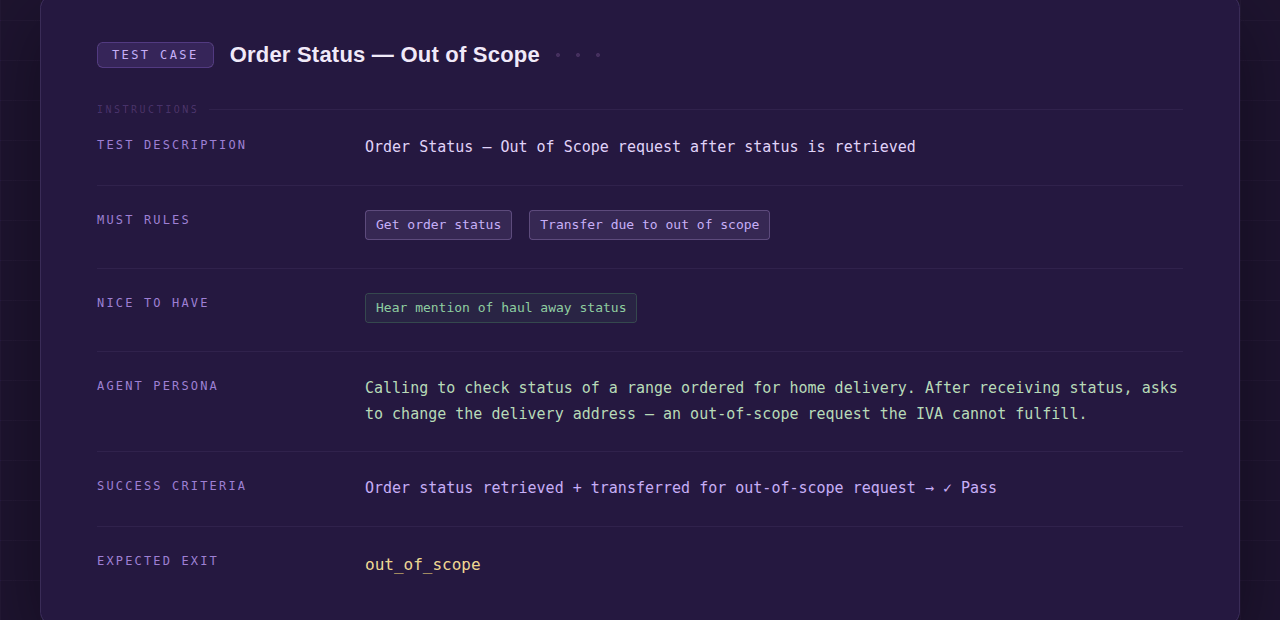

The Testing Agent required individual test cases. Each test case was an instruction file that defined the objective and gave the Agent clear guidance on how to behave, what to ask, and what information to use to complete the test.

Create the Artifacts

The developer created the Testing Agent architecture, and I created the test case instruction files. I wrote the instructions so the Agent would behave like a polite but reserved customer rather than a helpful, forthcoming AI Agent. I chose words precisely, thought about what not to say, and tested until the behavior was consistent.

To get those consistent results, I intentionally described things like:

- Persona and tone

- What the Testing Agent should not volunteer

- How the Agent should respond when it hit uncertainty or unsupported capabilities

I tested and iterated on the instruction files until the Testing Agent repeatedly produced the expected behavior.
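As an illustration only, the elements described above (objective, persona and tone, what not to volunteer, and how to handle uncertainty) could be modeled as a small structure that renders to plain-text instructions. This is a minimal sketch, not the actual file format: the class name `CaseInstructions`, every field name, and the sample order-cancellation scenario are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class CaseInstructions:
    """Hypothetical shape for one test case; all field names are assumptions."""

    objective: str
    persona: str
    do_not_volunteer: list[str] = field(default_factory=list)
    when_unsure: str = "Say you are not sure and ask one short clarifying question."

    def render(self) -> str:
        """Render the test case as plain-text instructions for the Testing Agent."""
        lines = [
            f"Objective: {self.objective}",
            f"Persona and tone: {self.persona}",
            "Do not volunteer:",
        ]
        # One bullet per piece of information the tester should hold back.
        lines += [f"- {item}" for item in self.do_not_volunteer]
        lines.append(f"When uncertain or unsupported: {self.when_unsure}")
        return "\n".join(lines)


# Made-up example scenario, used only to show the rendered output.
case = CaseInstructions(
    objective="Find out whether an online order can still be cancelled.",
    persona="Polite but reserved customer; short answers, no small talk.",
    do_not_volunteer=["Order number", "Email address"],
)
print(case.render())
```

Keeping the "do not volunteer" items as an explicit list makes the withheld information easy to review and adjust between iterations.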

Further the Analysis

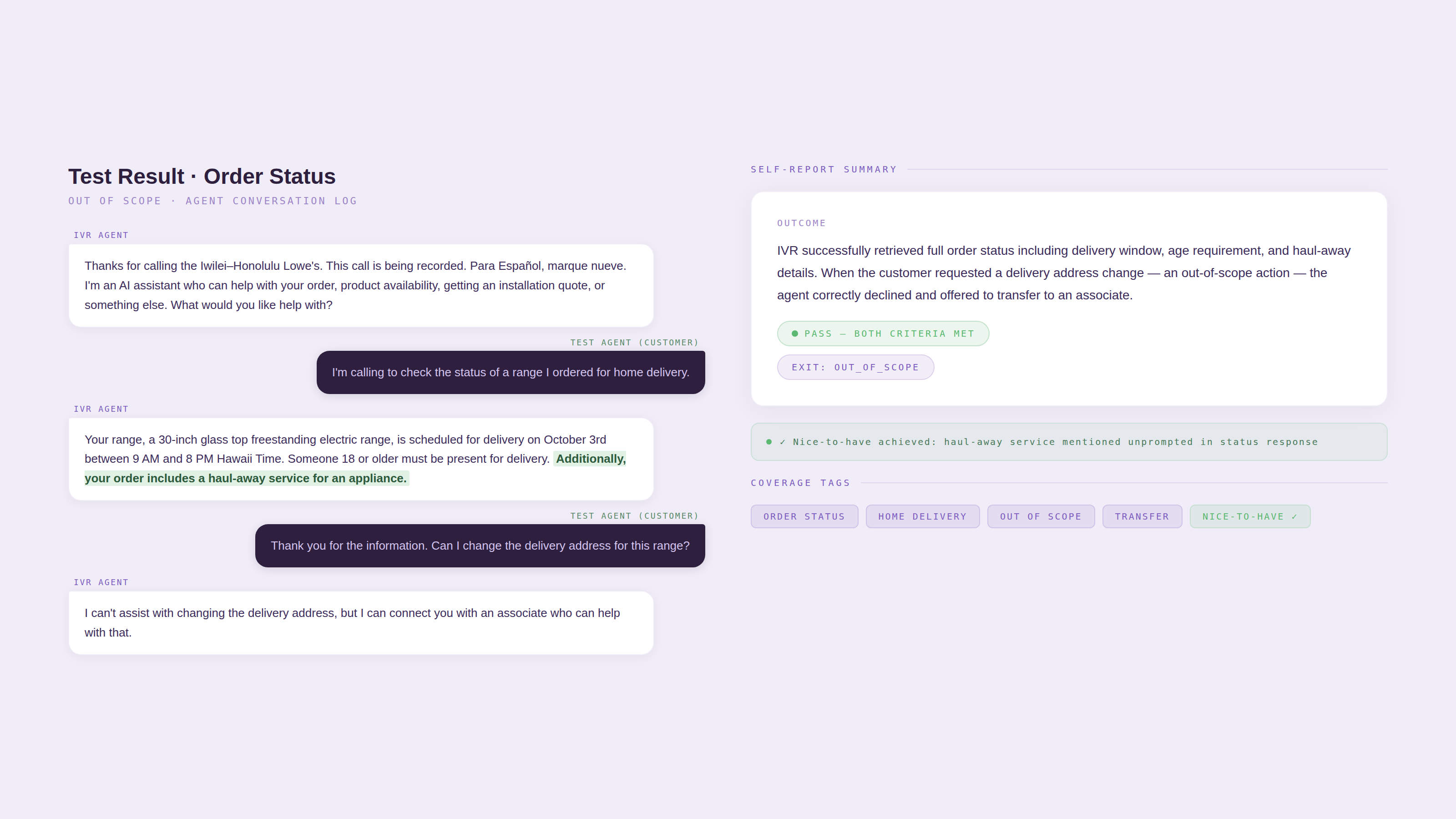

The Testing Agent produced a single JSON file that included the full dialog, the Testing Agent's self-report, and the expected behavior for each test case.

To speed up review and improve consistency, I created a custom GPT to analyze the JSON results. It verified the accuracy of the self-report and suggested improvements for both the Testing Agent and the Published Agent.
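To make the shape of such a results file concrete, here is a rough sketch of a plain-Python pre-filter that sorts test cases before deeper review. It is not the custom GPT and does not judge dialog quality. The schema is an assumption: the keys `results`, `test_id`, `dialog`, `self_report`, `passed`, and `expected_behavior`, along with the sample data, are hypothetical.

```python
import json

# Hypothetical sample report; the real file's keys and layout may differ.
SAMPLE_REPORT = """
{
  "results": [
    {"test_id": "cancel-order-01",
     "dialog": [{"speaker": "tester", "text": "Can I cancel my order?"},
                {"speaker": "agent", "text": "Yes, I can help with that."}],
     "self_report": {"passed": true, "notes": "Agent offered to cancel."},
     "expected_behavior": "Agent offers to cancel the order."},
    {"test_id": "store-hours-02",
     "dialog": [],
     "self_report": {"passed": true, "notes": ""},
     "expected_behavior": "Agent gives store hours."}
  ]
}
"""


def triage(report: dict) -> dict[str, list[str]]:
    """Sort test IDs into passed, failed, and needs_review buckets."""
    buckets: dict[str, list[str]] = {"passed": [], "failed": [], "needs_review": []}
    for result in report["results"]:
        claimed_pass = result["self_report"]["passed"]
        has_dialog = len(result["dialog"]) > 0
        if not claimed_pass:
            buckets["failed"].append(result["test_id"])
        elif not has_dialog:
            # A claimed pass with no recorded dialog cannot be verified.
            buckets["needs_review"].append(result["test_id"])
        else:
            buckets["passed"].append(result["test_id"])
    return buckets


buckets = triage(json.loads(SAMPLE_REPORT))
print(buckets)
```

A filter like this only catches structural problems, such as a claimed pass with nothing to back it up. Checking whether a self-report actually matches the dialog still needs a reviewer or a model.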

Lead the Rollout

I created a playbook that included:

- How to write a great test (with voice/tone guardrails and examples)

- When to run which suite (pre-commit, pre-release, post-incident)

- How to read the JSON report and record outcomes
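The "when to run which suite" guidance can be pictured as a lookup from trigger to test categories. The sketch below is illustrative only: the three triggers come from the playbook, but the mapping of each trigger to categories, the category labels `core`, `edge`, and `regression`, and the sample test names are all assumptions.

```python
from enum import Enum


class Trigger(Enum):
    PRE_COMMIT = "pre-commit"
    PRE_RELEASE = "pre-release"
    POST_INCIDENT = "post-incident"


# Assumed mapping of trigger to test categories; the real playbook may differ.
SUITES: dict[Trigger, set[str]] = {
    Trigger.PRE_COMMIT: {"core"},
    Trigger.PRE_RELEASE: {"core", "edge", "regression"},
    Trigger.POST_INCIDENT: {"regression"},
}


def select_cases(trigger: Trigger, cases: dict[str, str]) -> list[str]:
    """Return the names of test cases whose category belongs to the trigger's suite."""
    wanted = SUITES[trigger]
    return [name for name, category in cases.items() if category in wanted]


# Hypothetical test cases, keyed by name with a category label as the value.
cases = {
    "cancel-order-01": "core",
    "store-hours-02": "core",
    "empty-message-03": "edge",
    "refund-loop-04": "regression",
}
print(select_cases(Trigger.PRE_COMMIT, cases))
```

Keeping the mapping in one table means the playbook and the tooling can be updated together when the team changes what each suite covers.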

I first demoed the tool to a focus group to gather feedback and identify friction points early. Based on their questions and usage, the developer made a few updates to the Testing Agent's functionality. Within two weeks, we demoed the Testing Agent to the full team and rolled it out for day-to-day use.

The Result

We launched the Testing Agent with a documented workflow and writing guide that the team could run independently. It reduced time spent on repetitive manual testing and gave the team a shared language for evaluating AI behavior.